The Internet of Things (IoT) is quickly being adopted for mission-critical applications for several reasons. First, the IoT now incorporates increasingly sophisticated technologies, such as artificial intelligence (AI), augmented reality, edge computing, sensor fusion, and mesh networking, to tackle problems of increasing difficulty and importance. Second, as recent supply chain challenges have demonstrated, margins for error and delay are slim at best. Third, growing demand for healthcare, combined with scarce resources, means many medical services must become less costly and more efficient. Finally, the desire to conserve resources means devices must last longer and perform more reliably.

These trends present numerous business opportunities in fields that serve human health, safety, food production, environmental protection, and other key aspects of human flourishing. As technical challenges grow, each of the 5 Cs + 1 C of the IoT becomes more important, and AI can be part of the solution for some of them.

- Connectivity: A device’s ability to create and maintain reliable connections, even while roaming. Mission-critical applications cannot accept delayed or lost data.

- Compliance: A device’s conformance to the regulatory requirements for market access. Compliance problems must not delay implementation or lead to a product recall.

- Coexistence: A device’s ability to perform properly in crowded RF bands. Mission-critical devices must avoid packet loss, data corruption, and retries that drain battery charge.

- Continuity: A device’s ability to operate without battery failure. Manufacturers must ensure long battery life, especially for implanted devices and in emergencies where AC power is unavailable.

- Cybersecurity: IoT devices and infrastructure must be strong and resilient against cyber threats, including denial of service, contaminated data, and interception of sensitive information. Product development teams can use AI to simulate a variety of malware techniques based on exploits that have exposed vulnerabilities in the past.

- Customer Experience: Ideally, customers enjoy a flawless, optimized experience with intuitive applications that operate seamlessly from end to end on multiple platforms. The challenge is that the number of possible paths through a series of related software applications is virtually limitless, far too many to test exhaustively. Fortunately, AI can help here as well by guiding automated test systems based on how recently code has been added, how many defects have been found in particular code sections, and other pertinent factors.
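The test-prioritization idea in the Customer Experience item can be sketched as a simple weighted risk score. This is a hand-tuned heuristic standing in for an AI model, not a trained model itself; the `CodeSection` fields, the weights in `risk_score`, and the section names are all hypothetical. A learned model could supply the weights (and additional factors) instead.

```python
from dataclasses import dataclass


@dataclass
class CodeSection:
    """Hypothetical record describing one section of application code."""
    name: str
    days_since_change: int  # how recently code was added or modified
    defects_found: int      # defects historically found in this section


def risk_score(section: CodeSection, recency_weight: float = 1.0,
               defect_weight: float = 0.5) -> float:
    """Higher score means test sooner. The weights are illustrative assumptions."""
    recency = 1.0 / (1 + section.days_since_change)  # code changed today scores 1.0
    return recency_weight * recency + defect_weight * section.defects_found


def prioritize(sections: list[CodeSection]) -> list[str]:
    """Return section names ordered from highest to lowest test priority."""
    ranked = sorted(sections, key=risk_score, reverse=True)
    return [s.name for s in ranked]


sections = [
    CodeSection("ble_pairing", days_since_change=2, defects_found=4),
    CodeSection("ui_settings", days_since_change=30, defects_found=0),
    CodeSection("ota_update", days_since_change=0, defects_found=1),
]
print(prioritize(sections))  # ['ble_pairing', 'ota_update', 'ui_settings']
```

An automated test system could then spend most of its limited test time on the paths that touch the highest-ranked sections.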

Furthermore, operating environments are increasingly challenging, with temperature swings, irregular duty cycles, and an electromagnetically crowded spectrum. Some devices operate in locations that are difficult or hazardous to access, and some operate inside animal or human bodies. These factors place unprecedented demands on device batteries.

For medical devices, battery life often has direct health implications. Even in non-critical applications, premature battery failure can lead to complaints in the post-market surveillance that regulatory agencies monitor. Complaints that become excessive or that increase patient risk can be extremely costly for the manufacturer.

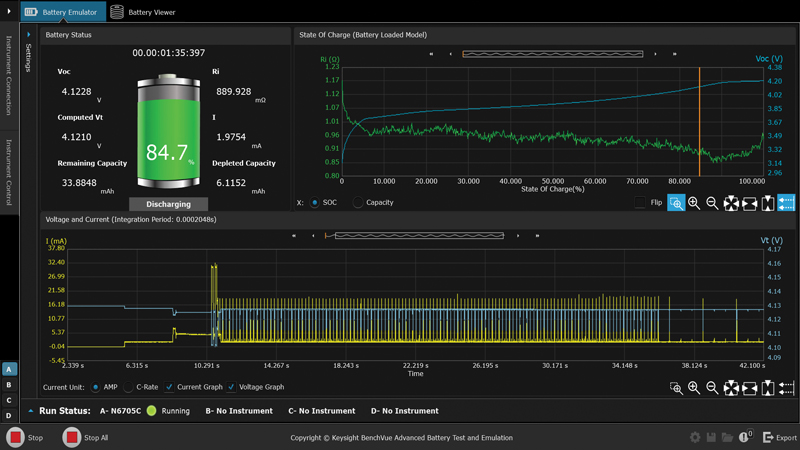

Battery emulation is especially important when the test engineer is changing the device’s hardware configuration or firmware. Without consistent battery emulation, the engineer cannot know whether a variation in run-down time is due to the intentional modifications or to variability in the batteries used for the run-down test, as described above. Because battery life is closely related to the other “Cs” of the IoT, any AI techniques that improve overall device operation can also improve battery life.
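A quick calculation shows how cell-to-cell variability can hide a firmware improvement. This sketch assumes an idealized model in which run-down time equals usable capacity divided by average current (ignoring cutoff voltage, temperature, and load pulses), and every current and capacity value in it is hypothetical.

```python
def rundown_hours(capacity_mah: float, avg_current_ma: float) -> float:
    """Idealized run-down time: usable capacity divided by average load current."""
    return capacity_mah / avg_current_ma


# Hypothetical average currents: firmware B draws 5% less than firmware A.
FW_A_MA = 10.0
FW_B_MA = 9.5

# Real cells: firmware B happens to be tested on a cell with 5% less usable capacity.
fw_a_real = rundown_hours(1000.0, FW_A_MA)
fw_b_real = rundown_hours(950.0, FW_B_MA)

# Emulator: both runs see the same battery model and the same capacity.
fw_a_emul = rundown_hours(1000.0, FW_A_MA)
fw_b_emul = rundown_hours(1000.0, FW_B_MA)

print(f"real cells: A={fw_a_real:.1f} h, B={fw_b_real:.1f} h")  # prints A=100.0 h, B=100.0 h
print(f"emulated:   A={fw_a_emul:.1f} h, B={fw_b_emul:.1f} h")  # prints A=100.0 h, B=105.3 h
```

With real cells, the firmware improvement disappears into the capacity spread. With the emulator, it appears as a difference of roughly 5.3 hours.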

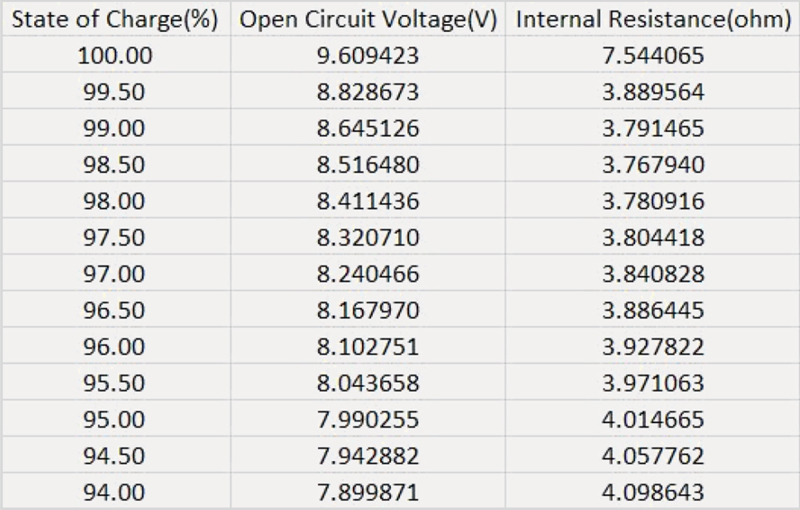

By using such a profile with a battery emulator, the engineer no longer needs an actual battery, which eliminates the associated uncertainty and variability. In addition, a battery emulator lets the user quickly set the state of charge (SoC) to any point in the model at the start of a test.
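A battery profile can be thought of as a table that relates SoC to electrical behavior. The sketch below assumes a simple model: an open-circuit voltage in series with an internal resistance, both linearly interpolated from the profile. The profile values and the `BatteryEmulatorModel` class are hypothetical and do not represent any instrument’s API or profile format.

```python
from bisect import bisect_right

# Hypothetical profile: (SoC, open-circuit voltage in V, internal resistance in ohms)
PROFILE = [
    (0.00, 3.00, 0.25),
    (0.10, 3.45, 0.18),
    (0.20, 3.60, 0.15),
    (0.50, 3.75, 0.12),
    (0.80, 3.95, 0.11),
    (1.00, 4.15, 0.10),
]


def lookup(soc: float) -> tuple[float, float]:
    """Linearly interpolate open-circuit voltage and resistance at a given SoC."""
    soc = min(max(soc, 0.0), 1.0)
    socs = [p[0] for p in PROFILE]
    i = bisect_right(socs, soc)
    if i >= len(PROFILE):  # SoC at the top of the table
        return PROFILE[-1][1], PROFILE[-1][2]
    lo, hi = PROFILE[i - 1], PROFILE[i]
    t = (soc - lo[0]) / (hi[0] - lo[0])
    return lo[1] + t * (hi[1] - lo[1]), lo[2] + t * (hi[2] - lo[2])


class BatteryEmulatorModel:
    """Toy model of how an emulator uses a profile (not a real instrument API)."""

    def __init__(self, capacity_ah: float, soc: float = 1.0):
        self.capacity_ah = capacity_ah
        self.soc = soc  # set directly; no physical discharge is needed

    def terminal_voltage(self, load_a: float) -> float:
        """Voltage the device sees: open-circuit voltage minus the I*R drop."""
        voc, r = lookup(self.soc)
        return voc - load_a * r

    def draw(self, load_a: float, seconds: float) -> None:
        """Coulomb counting: reduce SoC by the charge removed from the model."""
        self.soc -= load_a * seconds / 3600.0 / self.capacity_ah
        self.soc = max(self.soc, 0.0)


emu = BatteryEmulatorModel(capacity_ah=2.0, soc=0.15)  # start the test at 15% SoC
print(f"{emu.terminal_voltage(0.2):.2f} V")  # prints 3.49 V
```

Because the SoC is just a number in the model, every test run can start from exactly the same point on the profile.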

For example, the engineer may want to see how the device behaves near the end of battery life by starting the test with the SoC set to 15%. Doing this with an actual battery poses at least three challenges. First, the engineer must discharge the battery to the desired SoC, which could take hours, whereas a battery emulator can be set to any SoC in a fraction of a second. Second, the engineer must somehow verify the battery’s actual SoC. Third, every charge or discharge changes the battery’s behavior because of the cycle fade mentioned previously.
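The first challenge is easy to quantify. Assuming a hypothetical 2000 mAh cell discharged from full at a constant 500 mA, and ignoring rest periods and the time needed to verify the result, reaching 15% SoC takes several hours:

```python
def hours_to_discharge(capacity_mah: float, start_soc: float,
                       target_soc: float, load_ma: float) -> float:
    """Time to move a real cell from start_soc to target_soc at a constant load."""
    if not 0.0 <= target_soc <= start_soc <= 1.0:
        raise ValueError("expected 0 <= target_soc <= start_soc <= 1")
    # Charge to remove (mAh) divided by discharge current (mA) gives hours.
    return capacity_mah * (start_soc - target_soc) / load_ma


# Hypothetical 2000 mAh cell, full to 15% SoC, at a constant 500 mA load.
print(f"{hours_to_discharge(2000, 1.0, 0.15, 500):.1f} h")  # prints 3.4 h
```

Smaller discharge currents stretch this time proportionally, and each such discharge also adds a cycle to the cell.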