In the 21st century, artificial intelligence (AI) technology has been driving a paradigm shift in defense, particularly by significantly enhancing operational capabilities in the air force domain. AI-powered combat systems support a wide range of functions, such as target detection, tactical decision-making, situational awareness, and autonomous flight, thereby reducing the cognitive burden on human pilots and improving real-time responsiveness and survivability. However, the increasing autonomy of AI systems introduces complex challenges, including potential violations of international law, decision-making errors, and ambiguous attribution of ethical responsibility. This has created a growing need to redefine the role of human fighter pilots in AI-integrated operations. To address these issues, this study proposes a quantitative ethical decision-making model that mathematically integrates national military ethics principles and international legal norms while incorporating dynamic battlefield variables. The proposed model aims to contribute to defense policy development and combat training systems by offering a structured and operationally applicable ethical evaluation framework.

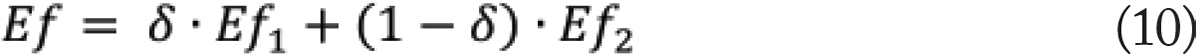

Additionally, 1,000 sets of battlefield situation variables were sampled from a normal distribution with mean μ = 0.5 and standard deviation σ = 0.1, and the national weighting factor (δ) was discretized into seven levels. These settings were used to conduct a sensitivity analysis of the proposed ethical evaluation functions across all scenario conditions.
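The sampling setup above can be sketched as a small Monte Carlo loop. This is a minimal illustration, not the authors' implementation: the evaluation function `ethical_score`, its weights `W_TACTICAL`, `W_LOAC`, and `W_NATIONAL`, the assumption of four tactical variables per set, and the placement of the seven δ levels evenly in [0, 1] are all hypothetical. Only the sample count (1,000), μ = 0.5, σ = 0.1, and the number of δ levels come from the text.

```python
import random
import statistics

# Sampling settings stated in the text.
N_SAMPLES = 1000
MU, SIGMA = 0.5, 0.1

# Assumptions (not given in the text): four tactical variables per set,
# seven evenly spaced delta levels in [0, 1], and illustrative weights.
N_TACTICAL = 4
DELTA_LEVELS = [i / 6 for i in range(7)]
W_TACTICAL, W_LOAC, W_NATIONAL = 0.4, 0.35, 0.25


def ethical_score(tactical, loac, national, delta):
    """Hypothetical linear evaluation; the national term is scaled by delta."""
    t = sum(tactical) / len(tactical)
    return W_TACTICAL * t + W_LOAC * loac + W_NATIONAL * delta * national


def sample_situations(n=N_SAMPLES, seed=0):
    """Draw n battlefield situation variable sets from N(MU, SIGMA)."""
    rng = random.Random(seed)
    return [
        {
            "tactical": [rng.gauss(MU, SIGMA) for _ in range(N_TACTICAL)],
            "loac": rng.gauss(MU, SIGMA),
            "national": rng.gauss(MU, SIGMA),
        }
        for _ in range(n)
    ]


def sensitivity_analysis(situations):
    """Evaluate the same sets at every delta level; return (mean, sd) per level."""
    results = {}
    for delta in DELTA_LEVELS:
        scores = [
            ethical_score(s["tactical"], s["loac"], s["national"], delta)
            for s in situations
        ]
        results[delta] = (statistics.mean(scores), statistics.stdev(scores))
    return results


if __name__ == "__main__":
    for delta, (mean, sd) in sensitivity_analysis(sample_situations()).items():
        print(f"delta={delta:.3f}  mean={mean:.3f}  sd={sd:.3f}")
```

Reusing one sample across all δ levels (common random numbers) is a design choice of this sketch: differences between levels then reflect δ rather than sampling noise. Drawing fresh samples per level would also work but makes level-to-level comparisons noisier.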

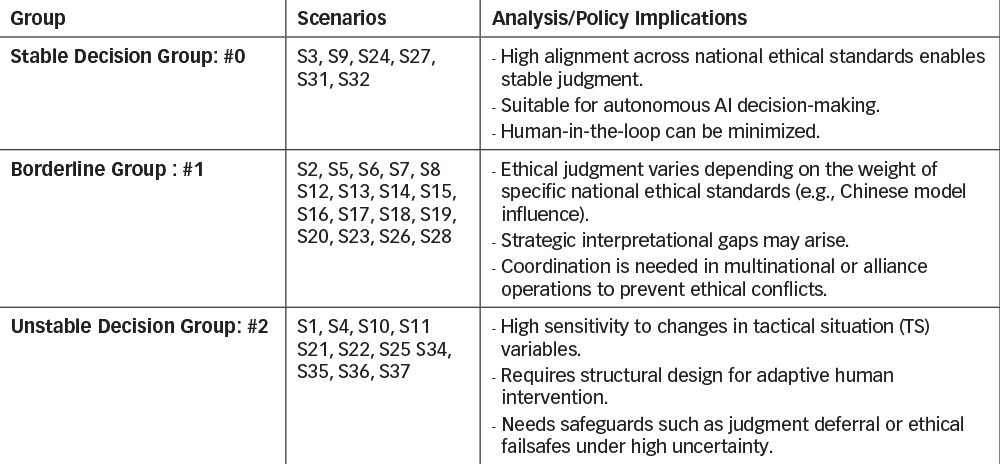

This instability appears to stem either from the low weighting of tactical variables such as Situational Awareness, Time Criticality, Survivability, and Coalition Compatibility, or from conflicts among these variables, which hinder the model’s ability to maintain coherent ethical judgments. In particular, scenario S29 presents a situation in which Time Criticality and Coalition Compatibility are emphasized simultaneously under different ethical principles. This creates a prioritization conflict that makes it difficult for the AI system to determine which standard should take precedence. As a result, Ef2 was highly sensitive: even small variations in the input conditions led to significant changes in the ethical evaluation outcome. Scenarios S35, S37, and S19 also produced notable variability in the Ef2 evaluation outcomes.
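One way to locate which tactical variable drives an instability of this kind is a one-at-a-time (OAT) sensitivity check: perturb each input slightly while holding the others fixed and measure the change in the evaluation score. The sketch below uses central differences. `toy_ef`, its weights, steepness, and threshold are hypothetical stand-ins for Ef2, whose functional form is not given here; the sketch does not model the S29 priority conflict itself, only how local sensitivity might be measured.

```python
import math
from typing import Callable, Dict

TACTICAL_VARS = [
    "situational_awareness",
    "time_criticality",
    "survivability",
    "coalition_compatibility",
]

# Hypothetical weights for a toy evaluation score (not taken from the study).
WEIGHTS = {
    "situational_awareness": 0.3,
    "time_criticality": 0.3,
    "survivability": 0.2,
    "coalition_compatibility": 0.2,
}


def toy_ef(x: Dict[str, float], steepness: float = 20.0, threshold: float = 0.5) -> float:
    """Hypothetical score: steep logistic of a weighted sum of tactical variables."""
    z = sum(WEIGHTS[k] * x[k] for k in WEIGHTS)
    return 1.0 / (1.0 + math.exp(-steepness * (z - threshold)))


def oat_sensitivity(
    f: Callable[[Dict[str, float]], float],
    baseline: Dict[str, float],
    h: float = 0.01,
) -> Dict[str, float]:
    """Central-difference estimate of df/dx_i at baseline, one variable at a time."""
    sens = {}
    for name, value in baseline.items():
        up = {**baseline, name: value + h}
        down = {**baseline, name: value - h}
        sens[name] = (f(up) - f(down)) / (2 * h)
    return sens
```

With all inputs at 0.5 the toy score sits on its decision threshold and each partial derivative is large (about 1.5 for Time Criticality), whereas with all inputs at 0.9 the same derivatives are close to zero. This mirrors how scenarios near a judgment boundary react strongly to small input changes.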

These scenarios represent situations in which the priority among LOAC principles (such as Distinction, Unnecessary Suffering, and Proportionality) is ambiguous or context-dependent, and the model accordingly showed heightened sensitivity when legal standards conflicted. These findings suggest that while the proposed model provides stable and reliable judgments in most scenarios, interpretational conflicts among ethical principles or imbalanced influence among tactical variables can destabilize decision outcomes. Therefore, real-world deployment requires supplementary algorithms, decision deferral mechanisms, or adaptive weight adjustment to handle such high-sensitivity scenarios.
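A decision deferral mechanism of the kind mentioned above could, for example, re-evaluate a scenario under small random input perturbations and hand control to a human operator when the resulting scores disagree too much. The function below is a hypothetical sketch: the perturbation scale, number of draws, standard deviation threshold, acceptance cutoff, and the `ACCEPT`/`REJECT`/`DEFER_TO_HUMAN` labels are illustrative assumptions, not parameters from the study.

```python
import random
import statistics
from typing import Callable, Sequence


def evaluate_with_deferral(
    f: Callable[[Sequence[float]], float],
    x: Sequence[float],
    noise_sd: float = 0.02,
    n_draws: int = 200,
    sd_threshold: float = 0.05,
    accept_cutoff: float = 0.5,
    seed: int = 0,
) -> str:
    """Hypothetical deferral rule based on score spread under input perturbation.

    Re-evaluates f on n_draws noisy copies of x. If the scores spread more than
    sd_threshold, the decision is deferred to a human; otherwise the mean score
    is compared with accept_cutoff.
    """
    if n_draws < 2:
        raise ValueError("n_draws must be at least 2 to estimate a spread")
    rng = random.Random(seed)
    scores = [
        f([xi + rng.gauss(0.0, noise_sd) for xi in x]) for _ in range(n_draws)
    ]
    if statistics.stdev(scores) > sd_threshold:
        return "DEFER_TO_HUMAN"
    return "ACCEPT" if statistics.mean(scores) >= accept_cutoff else "REJECT"
```

A constant scorer such as `lambda x: 0.8` yields zero spread and returns `ACCEPT`, while a step-shaped scorer evaluated exactly at its threshold returns `DEFER_TO_HUMAN`, because the perturbations push it to both sides of the step.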

This suggests heightened sensitivity to situational factors and the possibility that ethical standards are interpreted differently depending on context. Third, the Unstable Decision Group (#2) is characterized by a dominant α, suppressed β and γ, and high standard deviations. These characteristics indicate ethical judgment conflicts and potential trade-offs among tactical variables; such scenarios are high-risk and require mandatory human intervention in the ethical decision-making loop. This classification quantitatively demonstrates how the ethical evaluation structure of an AI combat system varies with the operational scenario. It also provides a framework for determining the appropriate level of automation, human involvement, and ethical risk for each scenario group. Moving forward, this categorization offers practical guidance for policy development, training system design, and the tailored application of ethical models to scenario-specific characteristics.
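The grouping described above can be sketched with k-means clustering over per-scenario profiles (α, β, γ, standard deviation), followed by a rule that maps each cluster to an oversight level. The source does not name its clustering algorithm, so k-means with farthest-first initialization is an assumption here, as are the oversight labels and the rule that ranks clusters by their centroid standard deviation. Cluster indices are arbitrary and do not correspond to the group numbers used in the text.

```python
import math
from typing import Dict, List, Sequence, Tuple

Profile = Tuple[float, ...]  # (alpha, beta, gamma, sd) for one scenario


def farthest_first_init(points: Sequence[Profile], k: int) -> List[Profile]:
    """Pick the first point, then repeatedly the point farthest from all chosen centroids."""
    centroids = [points[0]]
    while len(centroids) < k:
        nxt = max(points, key=lambda p: min(math.dist(p, c) for c in centroids))
        centroids.append(nxt)
    return centroids


def kmeans(points: Sequence[Profile], k: int, max_iter: int = 100):
    """Plain k-means: alternate nearest-centroid assignment and centroid updates."""
    if not 1 <= k <= len(points):
        raise ValueError("k must be between 1 and the number of points")
    centroids = farthest_first_init(points, k)
    labels: List[int] = []
    for _ in range(max_iter):
        new_labels = [
            min(range(k), key=lambda c: math.dist(p, centroids[c])) for p in points
        ]
        if new_labels == labels:
            break  # assignments are stable: converged
        labels = new_labels
        updated = []
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            # Keep the previous centroid if a cluster ends up empty.
            updated.append(
                tuple(sum(col) / len(members) for col in zip(*members))
                if members
                else centroids[c]
            )
        centroids = updated
    return labels, centroids


def assign_oversight(centroids: Sequence[Profile], sd_index: int = 3) -> Dict[int, str]:
    """Hypothetical rule: rank clusters by centroid sd and map them to oversight levels."""
    order = sorted(range(len(centroids)), key=lambda c: centroids[c][sd_index])
    levels = {c: "human_review" for c in order}
    levels[order[0]] = "automated"
    levels[order[-1]] = "mandatory_human_in_the_loop"
    return levels
```

For example, with six synthetic profiles (illustrative values only, not results from the study) forming low-spread, moderate-spread, and high-spread α-dominant pairs, `kmeans(profiles, 3)` recovers the three pairs and `assign_oversight` marks the high-spread, α-dominant pair for mandatory human-in-the-loop review.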

However, the model still exhibited relatively high variance in certain scenarios. These cases suggest residual sensitivity due to conflicts or imbalances among tactical variables, LOAC factors, and national ethical standards. Such instability was most pronounced when multiple contextual variables conflicted simultaneously or when ambiguity in the interpretation of ethical principles was present, reducing the consistency of judgment outcomes.

The proposed framework overcomes the limitations of conventional declarative ethical standards by offering a quantitative, context-aware evaluation structure applicable to real-world operational environments, thereby laying the foundation for practical deployment and policy formulation of AI combat systems. Simulation results revealed that the ethical function responded differently depending on national values and operational contexts, and that stability and sensitivity varied across scenarios.

In particular, the scenario clustering analysis identified both stable scenario types suitable for automated ethical judgment and high-risk types requiring human oversight. These findings underscore the need for context-specific, adaptive ethical system design in AI weapon systems.

For future research, several directions are proposed. First, expanding the range of tactical scenarios and incorporating multinational ethical standards will improve the accuracy and adaptability of integrated ethical models across various national and allied military forces. Second, the development of real-time decision-making algorithms, grounded in the proposed ethical function models, is essential for practical implementation in AI combat systems and training environments. Third, addressing ethical conflicts requires the design of dynamic priority adjustment mechanisms, potentially through rule-based approaches or reinforcement learning techniques. Lastly, in high-risk scenarios that necessitate human oversight, the integration of human-in-the-loop interfaces and decision-support systems will be critical to ensuring safe and effective human-AI collaboration.

This research holds both academic significance and strategic value in that it mathematically establishes ethical robustness for AI weapon systems and demonstrates its applicability in real-world military contexts. We anticipate that future efforts will extend this work toward practical implementation and global consensus-building in the domain of military AI ethics.
